5. Mapping & Navigation

SLAM, AMCL, and autonomous navigation with ROS

Summary

Estimated time to completion: 2 hours

Simulated robot: Turtlebot 3 Burger

What will you learn with this unit?

- Simulating a robot with Gazebo and ROS

- Kinematic Models: Moving a robot by publishing on topics

- Creating a map of the simulated environment using 2D-SLAM

- Using the Advanced Monte Carlo Localization AMCL and the ROS map server

- Overview of autonomous navigation with 2D maps using the move base stack

Robot Simulation with Gazebo

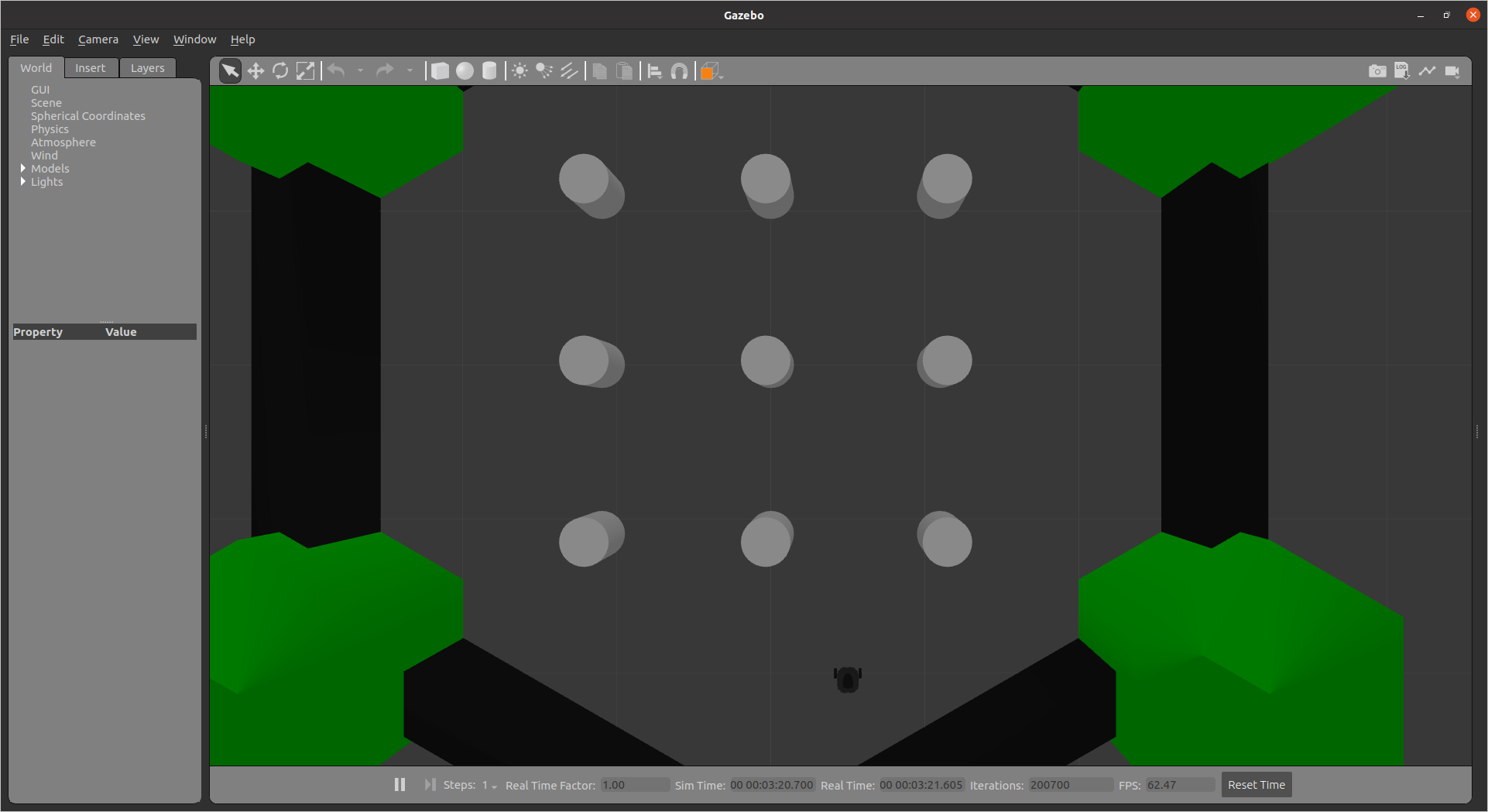

We start by launching the simulations provided by Robotis.

roslaunch turtlebot3_gazebo turtlebot3_world.launchNext, we need to bring up the coordinate frame transformations.

roslaunch turtlebot3_bringup turtlebot3_remote.launchYou should see the following window pop up:

Exercise [5 min]

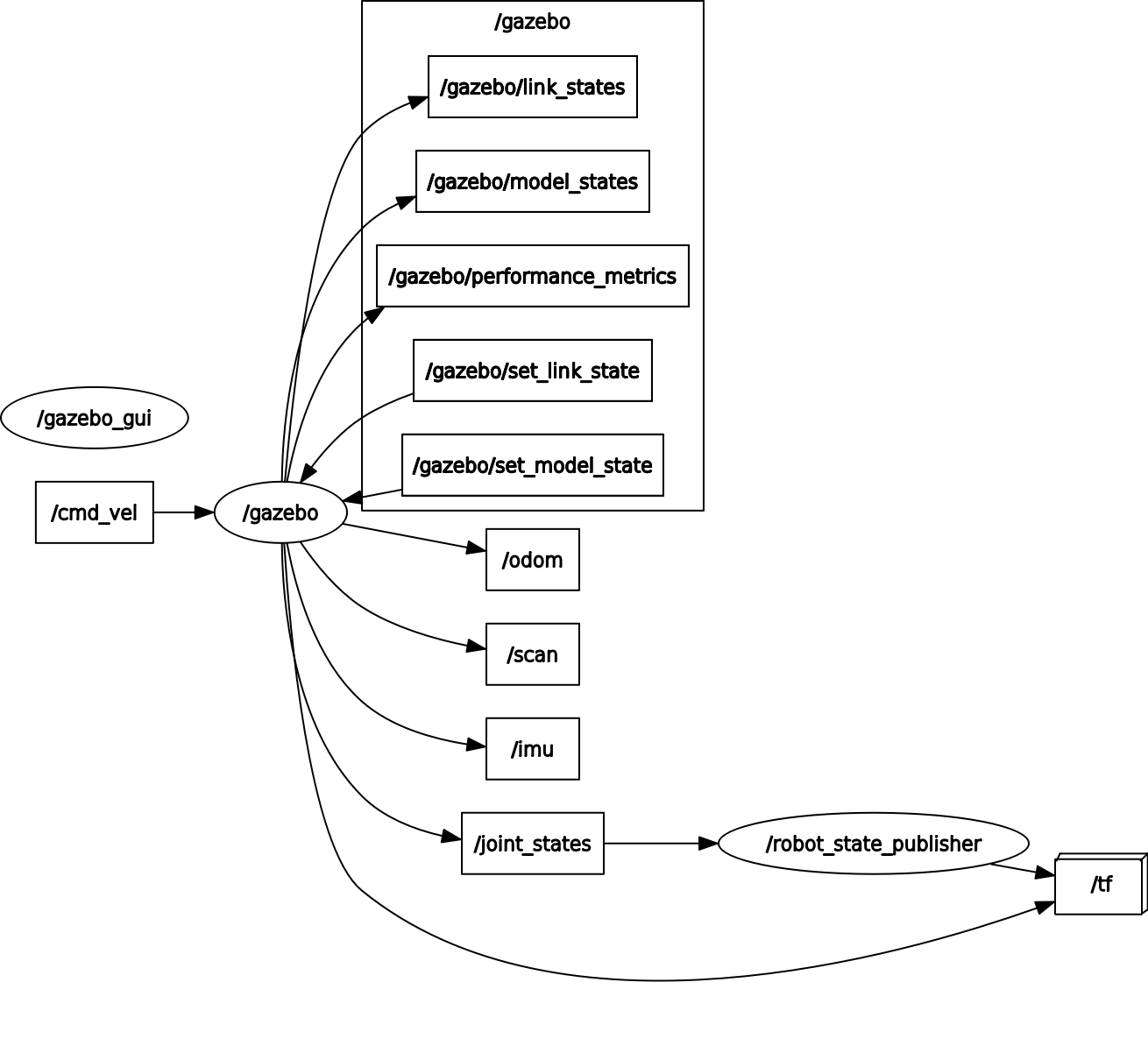

- Inspect the running nodes and topics

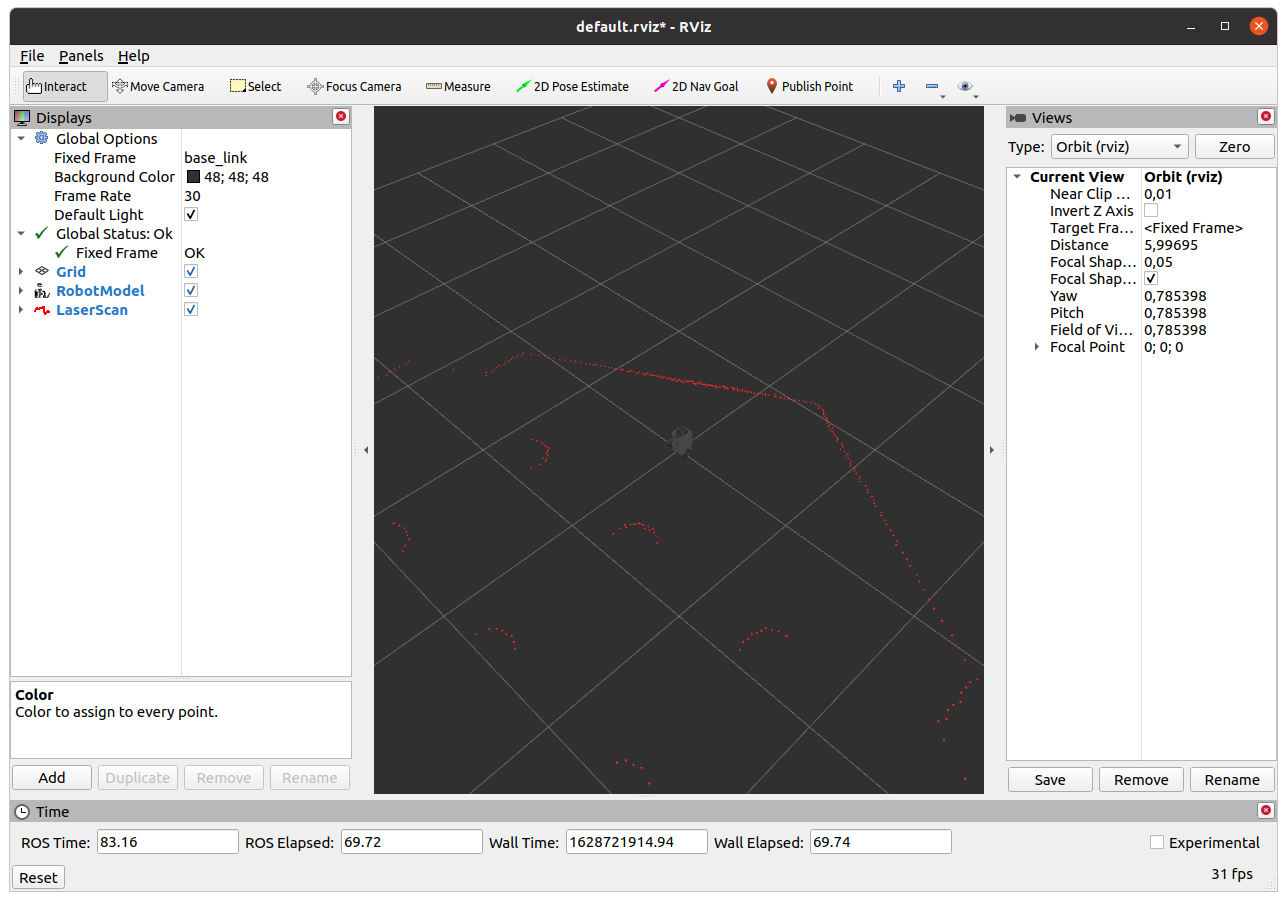

- Visualize the robot model and the laser scan data in Rviz

Moving a Robot by Publishing on a Topic

Mobile Robot Locomotion

We will now briefly discuss mobile robot locomotion and forward kinematics.

The term "robot locomotion" refers to the various strategies that robots use to move from one location to another. In this unit, we focus on wheeled mobile robots. Unlike industrial robots, mobile robots change their pose based on wheel motion. The pose of our robot in the world is thus a function over time and not a function of current angular joint values. The mathematics of forward kinematics (and inverse kinematics, as we will see in the next chapter) is similar to industrial robot kinematics.

The locomotion of mobile robots is based on kinematic assumptions summarized in a "model". In this unit, we analyze and derive kinematic models for mobile robots. Initially, we start considering the most simple mobile robot: a driven wheel.

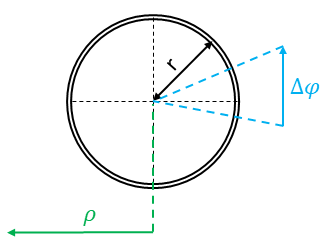

Consider a simple wheel on a plane. This wheel has a radius r. If the wheel rotates , the distance moved by the center of the wheel is . This can be described by the equation:

Where is the motion of the wheel center.

Simple wheel on a plane

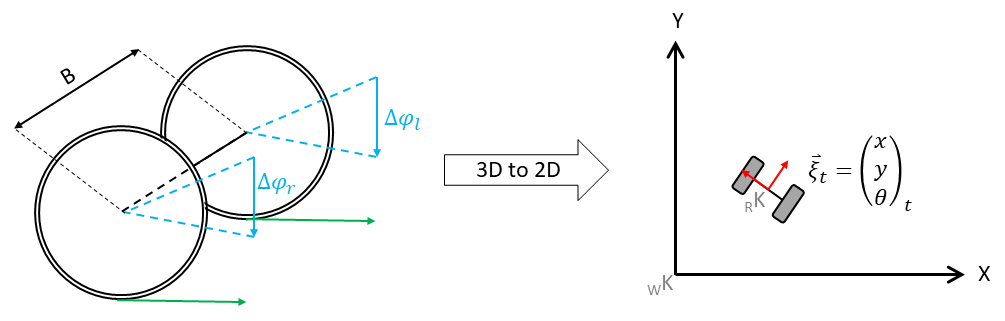

However, this mobile robot would be somehow useless because it cannot change the orientation. It can only move along the direction of . Thus, the next step is the extension of this simple wheel model: we add a second wheel and connect the wheels. This model is called a differential-driven mobile robot, and it is the most important model for mobile robotics. A 3D sketch of the model and a 2D simplification is visible in the figure below:

3D model (left) | Simplified 2D model (right)

In the figure on the left side, you see the 3D model. Two wheels are connected with a so-called baseline with length B. Notice that robots, like the one above, are differential mobile robots. This is only true if they have two differently controlled wheels that are in alignment. Based on the wheel movement and , the mobile device is now able to move on a plane. Since the third dimension is not used, a mobile robot is typically controlled on a 2D plane (the top view, see the right part of the figure above).

In the two-dimensional space, the mobile robot is controlled in a world coordinate system/frame .

The pose

at time t is defined in the world frame. However, the wheel motion is described in the wheel coordinate system and can be projected into the robot coordinate system _RK (see red coordinate system) using the kinematic configuration.

Now, it should be obvious that the pose at t must be somehow a function of the wheel motion and the previous pose . In this section, we will derive functions mapping motion from the world coordinate system to the wheel coordinate system and vice versa.

Deriving the Change of Pose

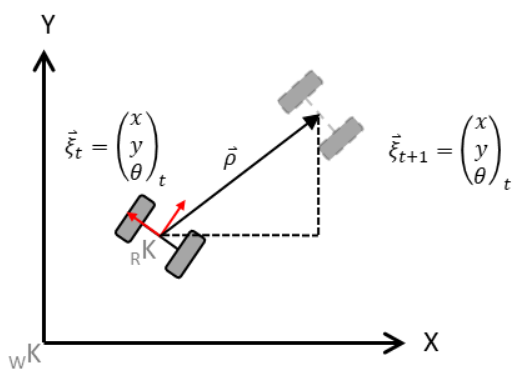

In this unit, we will only work with differential drive or holonomic mobile robots. Let us continue with the derivation of the change of pose to . Consider the following image.

Simplified Kinematic Model of the Mobile Robot

Thus we define the robot state and want to calculate the new state based on the motion of the left wheel and the right wheel .

As we can see, the robot will move meters. The X- and Y-components of have to be estimated based on the latest orientation of the robot (e.g. ) and the change of orientation. This change of the robot's orientation, as well as , are functions of the wheel motion.

The driven distance:

The change in orientation:

(Where B is the baseline — the distance between the wheels). Based on these calculations, the movement of the robot can be determined. For this calculation, we must know the previous pose . Accumulating the robot's motion leads to the new pose. To calculate this, we "split" the motion into the X and Y components.

This is simply done by using sine and cosine:

However, the equation has a shortcoming: it assumes a linear movement of the robot, but it is sufficient for our purposes. A more exact solution will be discussed in a different unit.

Forward Kinematics in ROS

Now that we know how to calculate the forward kinematics of a differential drive mobile robot, let's see how we implement it using ROS. (Remember that we already moved the TurtleBot in the previous unit in the move_turtlebot.py file).

In the following example, we will create a node that moves the robot to a specific pose referenced to the starting pose of the robot:

Simple Goal Mover in ROS

In this example, we'll implement a simple goal mover using ROS. The robot will move to a specified position in a simulated Gazebo environment.

#!/usr/bin/python3

import rospy

from nav_msgs.msg import Odometry

from tf.transformations import euler_from_quaternion

from geometry_msgs.msg import Point, Twist

from math import atan2, sqrt, hypot

class SimplePose:

def __init__(self):

"""Initializes the class member variables"""

self.x = 0.0

self.y = 0.0

self.theta = 0.0

self.goal_tolerance = 0.2

self.sub = rospy.Subscriber("/odom", Odometry, self.odom_callback)

self.pub = rospy.Publisher("/cmd_vel", Twist, queue_size=1)

# Waits for a message on the /odom topic to ensure the simulation has started

rospy.wait_for_message("/odom", Odometry, 10)

def odom_callback(self, msg):

"""Callback function to update the robot's position based on odometry data"""

self.x = msg.pose.pose.position.x

self.y = msg.pose.pose.position.y

rot_q = msg.pose.pose.orientation

(_, _, self.theta) = euler_from_quaternion([rot_q.x, rot_q.y, rot_q.z, rot_q.w])

def go_to(self, goal_point):

"""Moves the robot towards the goal point"""

speed = Twist()

rho = float('inf') # Initially set to a very large number

while rho > self.goal_tolerance:

delta_x = goal_point.x - self.x

delta_y = goal_point.y - self.y

rho = sqrt(delta_x ** 2 + delta_y ** 2)

rospy.loginfo_throttle_identical(1, f"Distance to goal= {rho:.2f}m")

angle_to_goal = atan2(delta_y, delta_x)

if abs(angle_to_goal - self.theta) > 0.1:

speed.linear.x = 0.0

speed.angular.z = 0.3

else:

speed.linear.x = 0.22

speed.angular.z = 0.0

self.pub.publish(speed)

rospy.sleep(0.01)

def stop_robot(self):

"""Stops the robot's movement"""

speed = Twist()

speed.linear.x = 0.0

speed.angular.z = 0.0

self.pub.publish(speed)

if __name__ == '__main__':

rospy.init_node("speed_controller")

simple_pose_mover = SimplePose()

goal = Point()

goal.x = 5

goal.y = 5

try:

simple_pose_mover.go_to(goal)

except (KeyboardInterrupt, rospy.ROSException) as e:

rospy.logerr(e)

finally:

simple_pose_mover.stop_robot()

position_error = hypot(goal.x - simple_pose_mover.x, goal.y - simple_pose_mover.y)

rospy.loginfo(f"Final position error: {position_error:.2f}m")Explanation

- Class Initialization: We define the

SimplePoseclass, which initializes subscribers and publishers for the/odomand/cmd_veltopics. - Odometry Callback: This method updates the robot's current position (

x,y, andtheta) using the odometry data. - Move to Goal: In the

go_tomethod, we calculate the distance (rho) and angle to the goal. If the robot is not facing the correct direction, it will rotate until aligned, then move straight. - Stopping the Robot: After reaching the goal, the robot stops.

Exercise [10 min]

Create a launch file with the name simulation2.launch that:

- spawns the turtlebot3 burger in an empty gazebo world at position x=0, y=0

- starts all TFs for the turtlebot3 burger

- starts the just now created simple_pose.py and try to understand the code

- comment the functions in the simple_pose.py so that you understand what is happening

Exercise Solution

We can launch the TurtleBot in Gazebo and test this script using the following launch file simulation2.launch.

<launch>

<include file="$(find turtlebot3_gazebo)/launch/turtlebot3_empty_world.launch">

<arg name="model" value="burger"/>

<arg name="x_pos" value="0.0"/>

<arg name="y_pos" value="0.0"/>

</include>

<include file="$(find turtlebot3_bringup)/launch/turtlebot3_remote.launch"/>

<node name="simple_pose" pkg="first_pkg" type="simple_pose.py" output="screen"/>

</launch>Discussion As you probably have noticed the developed code is not realy working well. Let's change this by implementing a direct controller based on the angle and distance to the goal. For this update the init function and the go_to functions as depicted below:

def __init__(self):

"""Initializes the class member variables

"""

self.x = 0.0

self.y = 0.0

self.theta = 0.0

self.goal_tolerance = 0.02

self.max_vel=0.22

self.max_omega = 2.84

self.k_rho = 0.3

self.k_alpha = 0.8

self.sub = rospy.Subscriber("/odom", Odometry, self.odom_callback)

self.pub = rospy.Publisher("/cmd_vel", Twist, queue_size=1)

# we wait for a message on the odom topic to make sure the simulation is started

rospy.wait_for_message("/odom", Odometry, 10)

def go_to(self, goal_point):

"""Calculates the current distance (rho) to the goal_point as well as the angle to the goal.

Based on the delta angle to the goal this function decides wether to rotate the robot in place or go straight ahead.

Args:

goal_point (Point): [description]

"""

speed: Twist = Twist()

rho = 999999999999999999

while rho > self.goal_tolerance:

delta_x = goal_point.x - self.x

delta_y = goal_point.y - self.y

rho = sqrt(delta_x**2 + delta_y**2)

rospy.loginfo_throttle_identical(1, "Distance to goal= {}m".format(rho))

angle_to_goal = atan2(delta_y, delta_x)

alpha = angle_to_goal - self.theta

v = self.k_rho * rho

omega = self.k_alpha * alpha

speed.linear.x = v if v <= self.max_vel else self.max_vel

speed.angular.z = omega if omega <= self.max_omega else self.max_omega

self.pub.publish(speed)

rospy.sleep(0.01)Mapping

Mapping describes the process of generating a useable map. In ROS this map is described using two files:

- An image: The discretized map is described using pixels. A white pixel is free, a gray is unknown and a black is occupied

- A yaml description file: This file describes the maps hyperparameters such as square meters per pixel

In this unit, we use the gmapping algorithm. This piece of software subscribes to two topics:

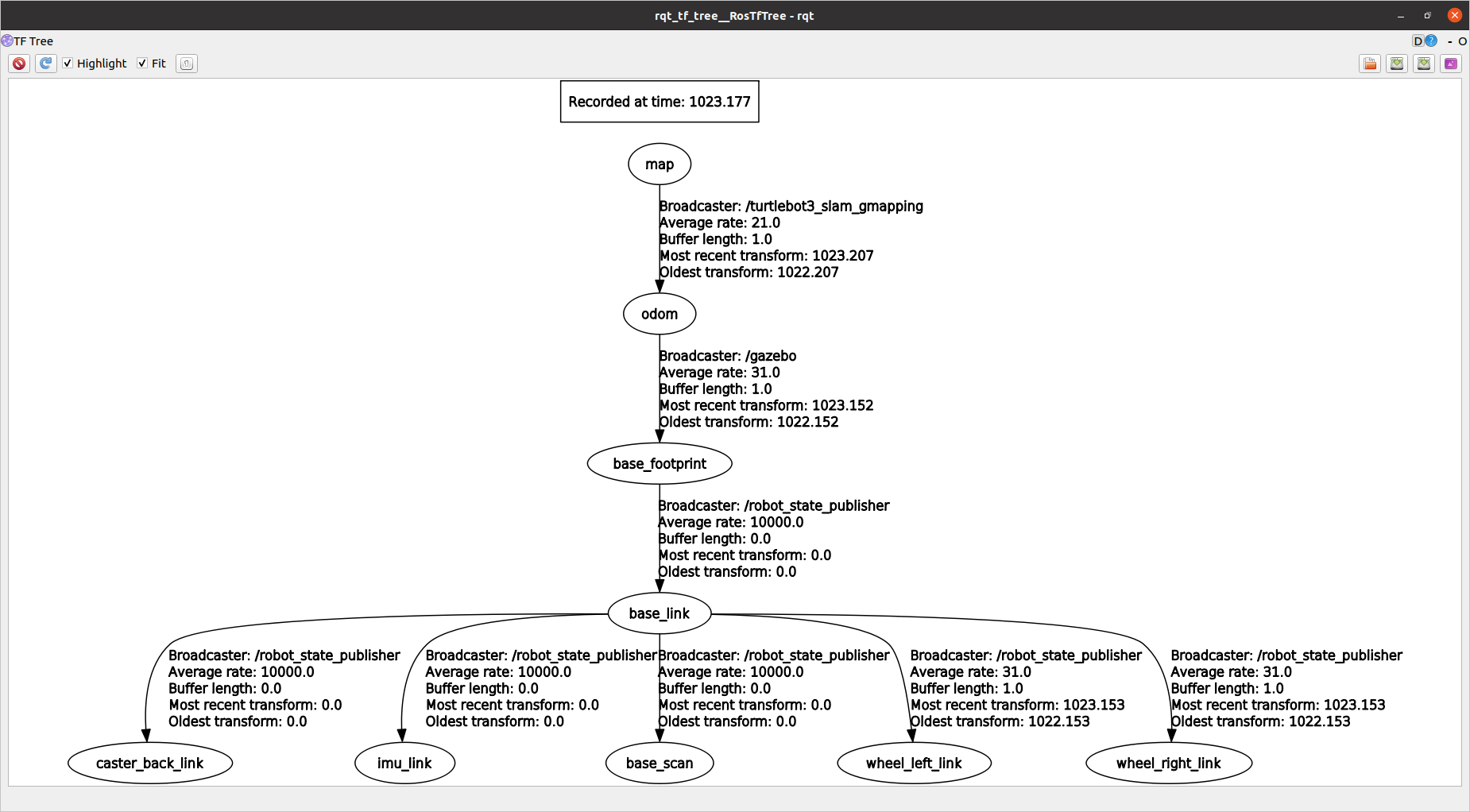

Obviously, the gmapping ROS node needs the topic of the laserscaner. Typically, your robot will publish a "\scan_description>\scan" (e.g.: "\front_scan\scan") topic. Since gmapping waits for the "\scan" topic, you have to remap. As you already know, the TF in ROS is used to coordinate the coordinate systems. To be able to transform the laserscan data to the robot's current pose, the TF tree must contain a complete description of the robot's joints as well as the sensor coordinate systems. Additionally, a TF from a world coordinate system to the current robot pose (typically named "\odom") must exist.

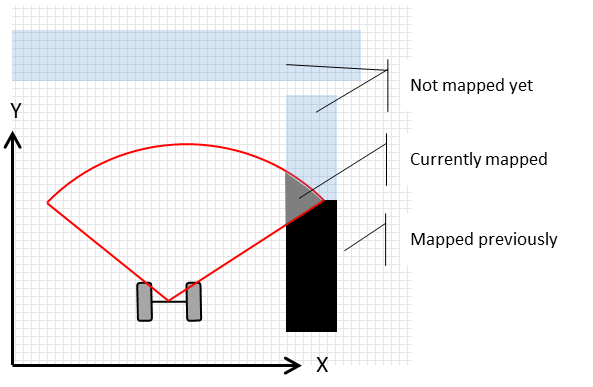

After the topics of gmapping were remapped, the node publishes a map. The default hyperparameters of the algorithm are typically good enough for our purpose. Initially, the map will look like the following sketch:

The map will be created during manual robot movement (of course, there are solutions for autonomous map creation). Depending on your system, a ROS keyboard control is enought for this task.The map will be created during manual robot movement (though autonomous solutions for map creation also exist). Depending on your system, ROS keyboard control may be enough for this task.

Additional Information

As mentioned previously, modern mobile robotics heavily relies on statistics. The gmapping ROS package is a SLAM (Simultaneous Localization and Mapping) application. In SLAM, we estimate both the current robot pose and the map . This estimation is based on the knowledge gathered so far — movement commands and measurements . This is summarized by the probability distribution:

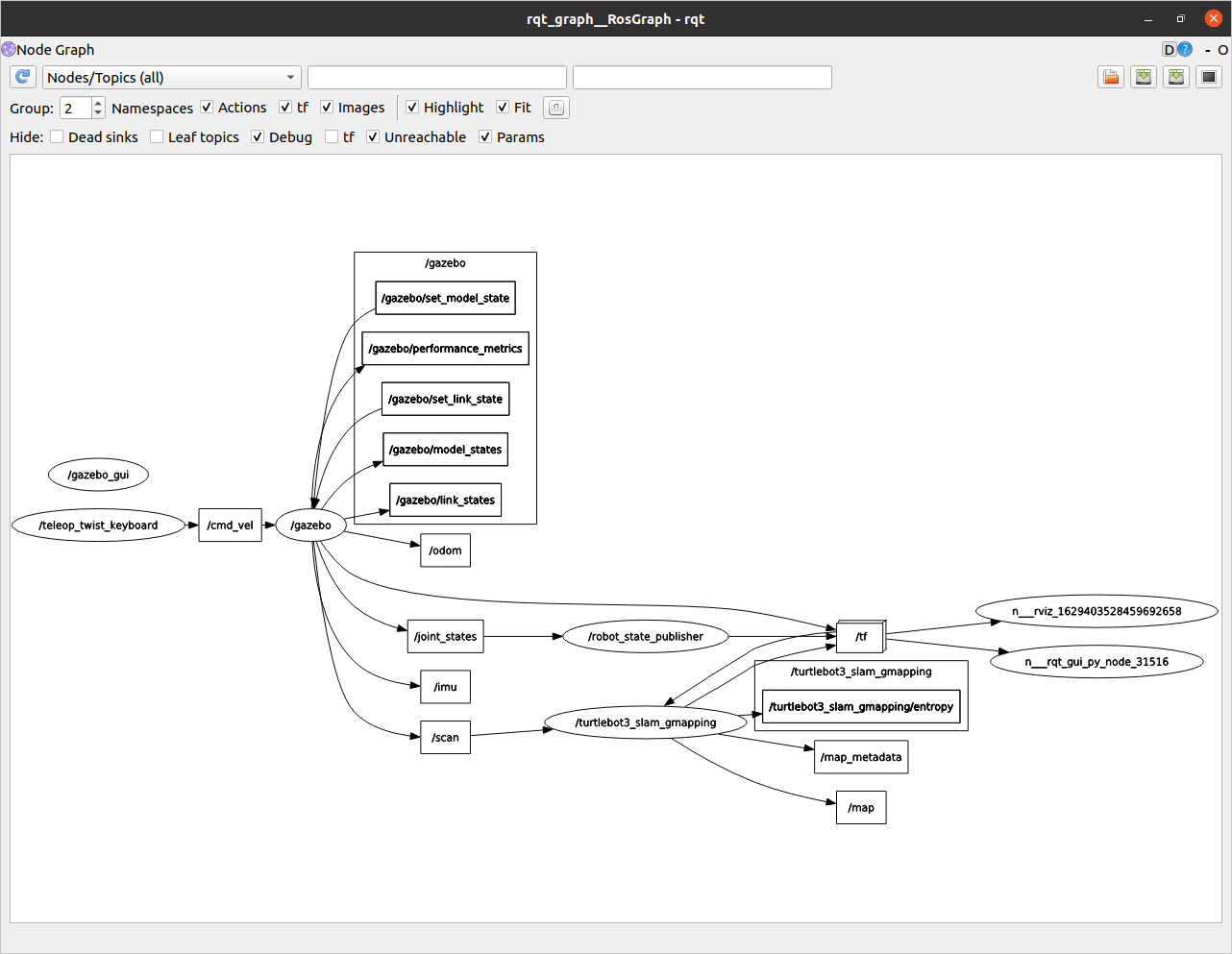

Gmapping Demo

We will use the existing TurtleBot3 simulation in Gazebo provided by ROBOTIS. Execute the following commands in separate terminals:

Start the simulation in Gazebo and spawns the robot in the world

roslaunch turtlebot3_gazebo turtlebot3_world.launch Starts the gmapping-based SLAM for the TurtleBot3. We use a preconfiguerd launch file wich inclues a preconfigured rviz file. However you can also click together your own vizualization in rviz.

roslaunch turtlebot3_slam turtlebot3_slam.launch slam_methods:=gmappingNow drive the robot around to create the map. To enable teleoperation, you can run the following command:

roslaunch turtlebot3_teleop turtlebot3_teleop_key.launchAlternatively, you could use rqt_robot_steering for control.

rosrun rqt_robot_steering rqt_robot_steeringLet's save our map in a folder named maps inside our package first_pkg with the name my_map. Make a foder called maps in the first_pkg package:

mkdir -p $(rospack find first_pkg)/mapsMove to the home directory:

cd Give the user permission to the folders:

sudo chown -R fhtw_user catkin_wsrosrun map_server map_saver -f "$(rospack find first_pkg)/maps/my_map"Exercise [5 min]

- Move the robot using any teleoperation method we've discussed so far.

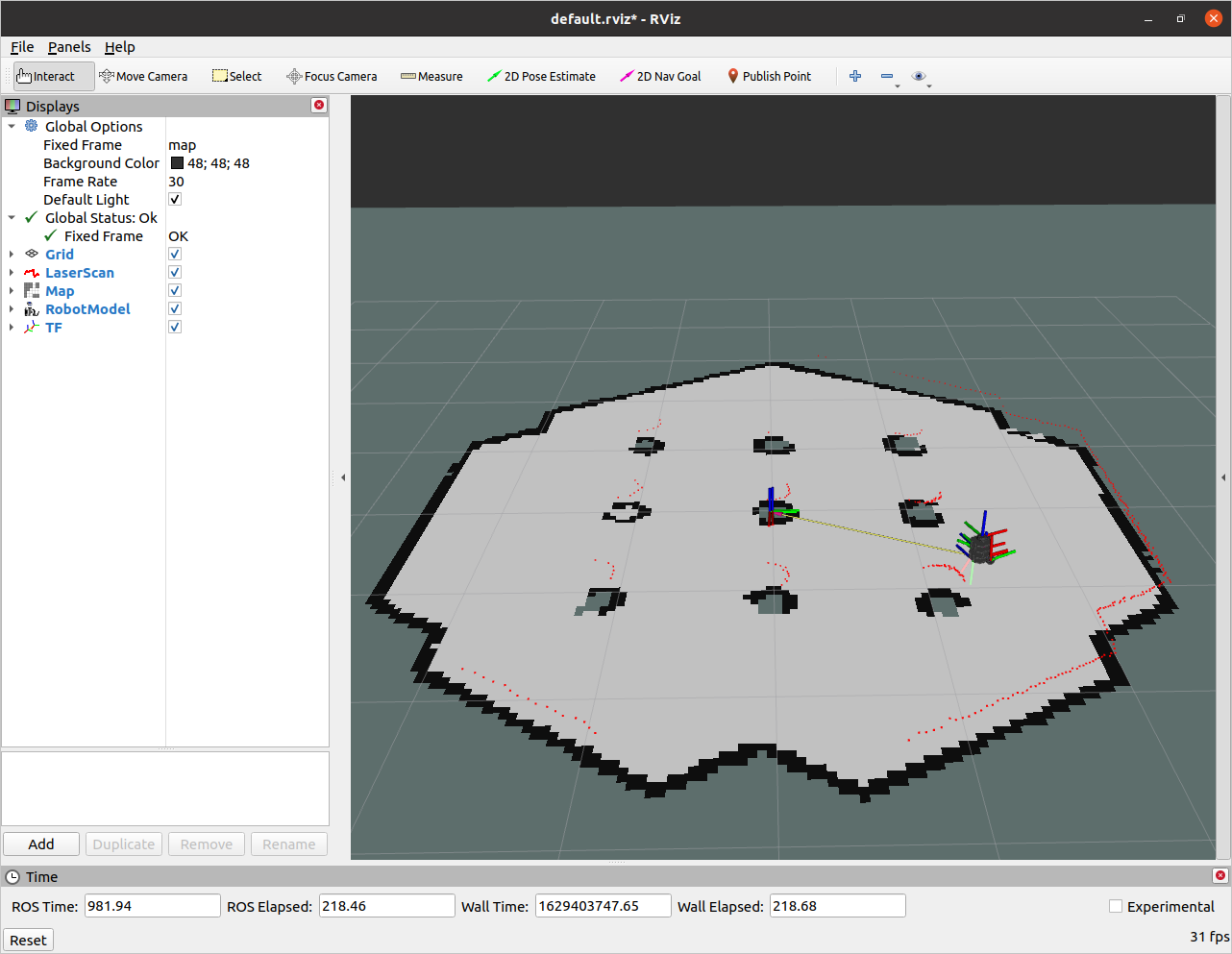

- Inspect the TF tree and rqt_graph. What do you observe?

- Create a complete map of the environment.

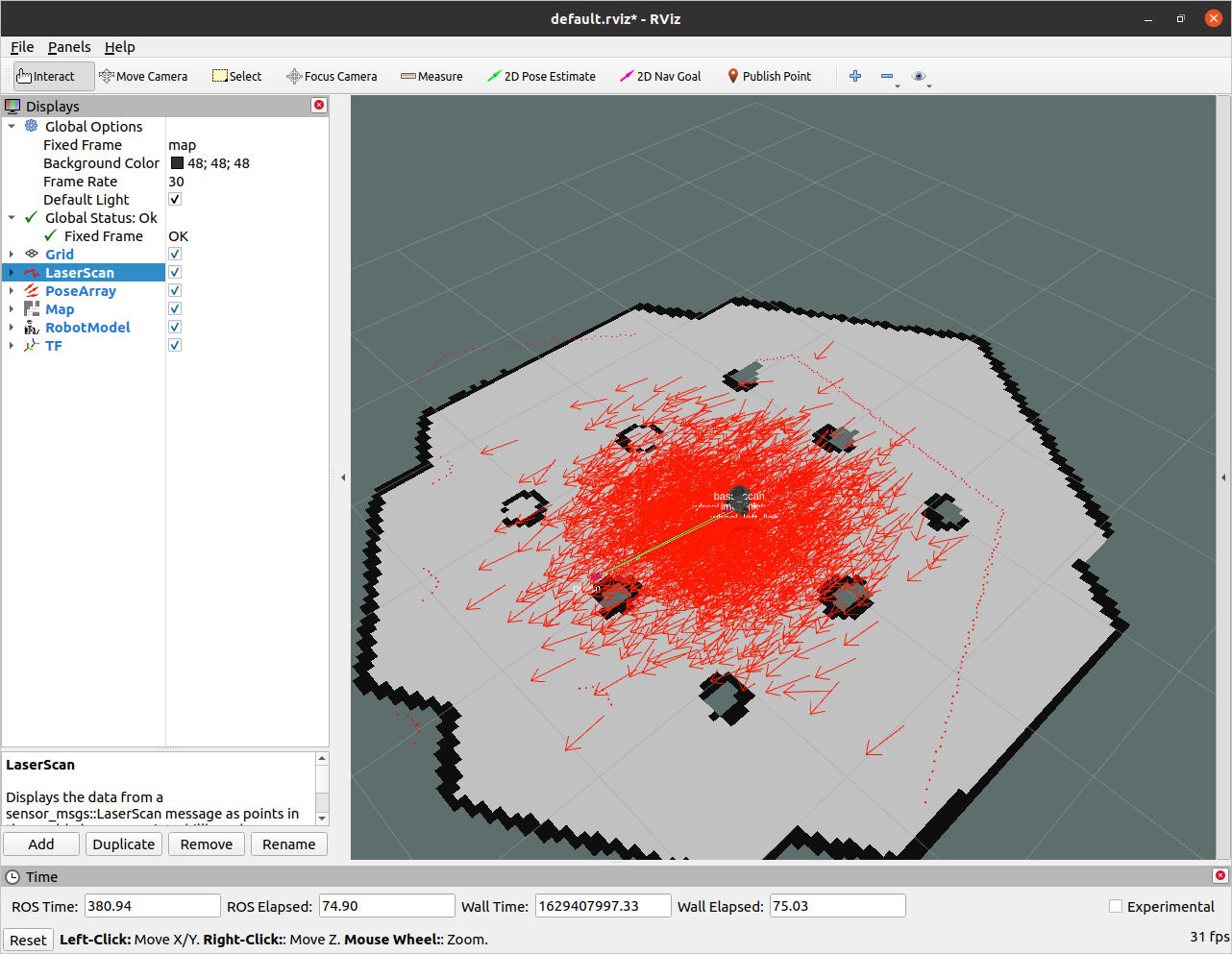

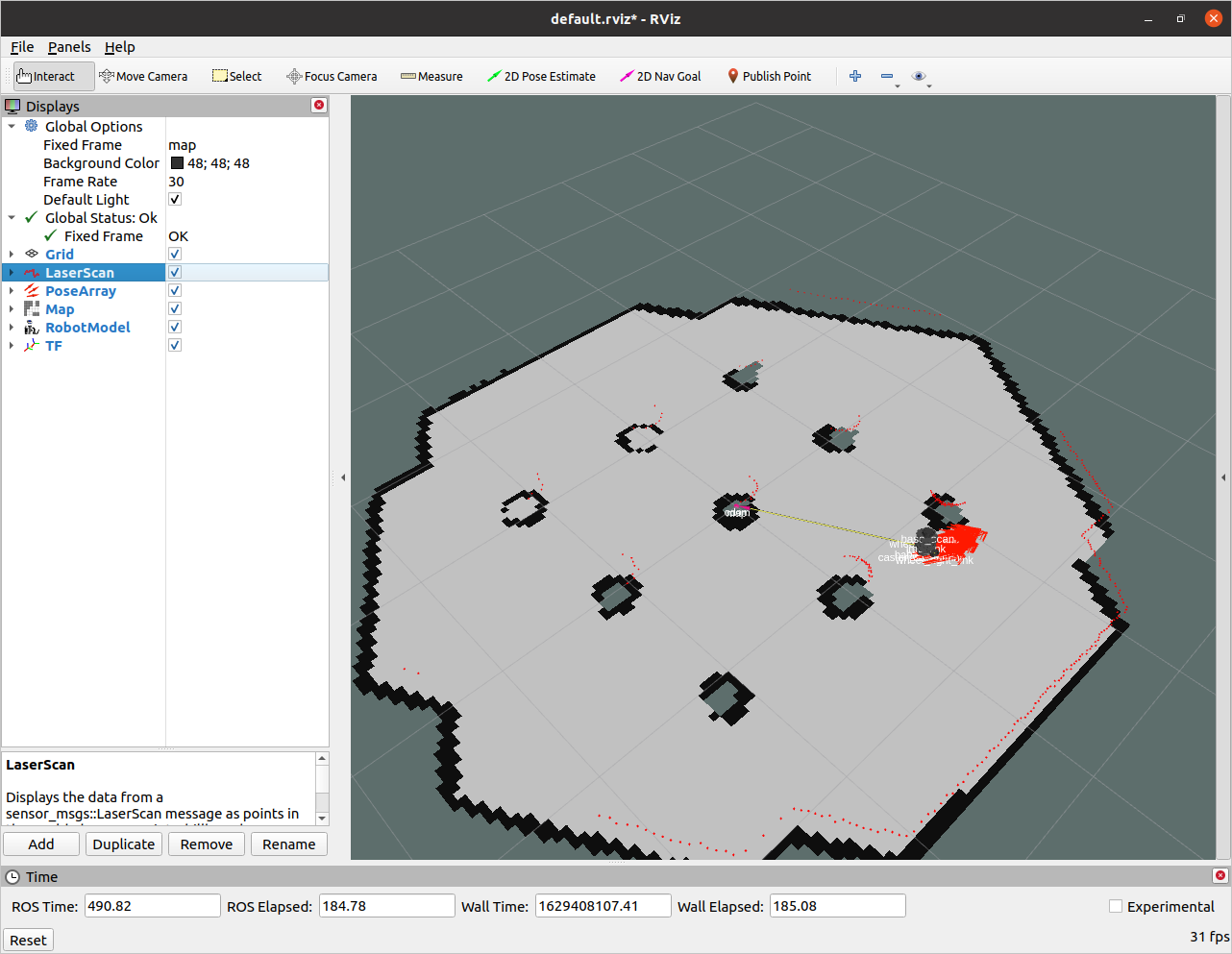

- Open RVIz and visualize the following:

- Robot model

- TFs (without names)

- Laser scan data

- The map created by gmapping

Refer to the following figures for the desired output in RVIz.

Now that we know how to create a map, let's look into how to save it for later.

Map Server

The map_server provides utilities to create the YAML and image file.

The syntax is:

rosrun map_server map_saver [--occ <threshold_occupied>] [--free <threshold_free>] [-f <mapname>] map:=/your/costmap/topicExercise [1 min]

- Inspect the generated YAML file.

Exercise Solution

cat $(rospack find first_pkg)/maps/my_map.yamlimage: /home/username/catkin_ws/src/JupyterROS/Basic_ROSpy/first_pkg/maps/unit3_map.pgm

resolution: 0.050000

origin: [-10.000000, -10.000000, 0.000000]

negate: 0

occupied_thresh: 0.65

free_thresh: 0.196Localization

Localization Using AMCL

You have already learned the principles of mobile robot localization using wheel motion and kinematics. We have also discussed the issue of noisy data, but we have not found a full solution for the process and sensor noise. Without delving into deeper topics (which are part of the master degree program "Master in Robot Engineering"), we can formulate the main problem: it is not possible to describe robot motion and the robot's state using classic statistical models, such as multivariate Gaussian distributions.

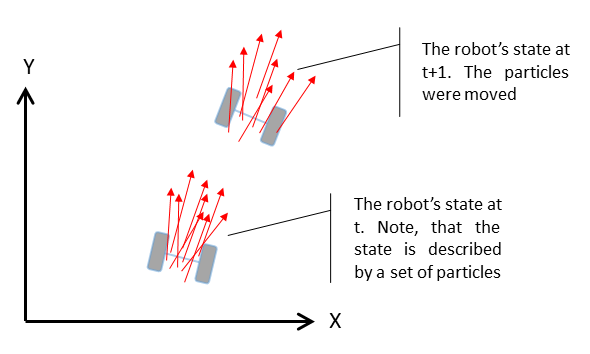

Instead of using parametric probability density functions to describe the robot's state and motion, we can use a technique from statistical physics called Markov Chain Monte Carlo (MCMC). MCMC uses many (typically more than 500) samples or potential hypotheses of the robot's state, referred to as "particles". This approach is known as a particle filter. The method can be simplified into two main steps:

-

Sampling: Each particle is moved according to a noisy motion command. For example, using noisy motion data, we estimate each particle's movement using kinematics. Each particle moves in accordance with the real physical movement. Next, we evaluate each particle using sensor signals (e.g., checking if the motion is consistent with sensor values, like those from an accelerometer) in a probabilistic framework. This results in a set of moved particles, each weighted by the sensor data.

-

Resampling: Imagine you have a bag of colored balls — two red and eight blue. The probability of drawing a red ball is 20%. If you sample "with replacement" (putting the ball back after each draw), you should draw a red ball 20% of the time. In resampling, particles are selected based on their weight (better particles are sampled more often). This creates a "survival of the fittest" scenario, where the best hypothesis of the robot's state survives.

The following figure illustrates a particle filter application for robot localization. Each arrow represents a particle, with the starting point representing the robot's position and the arrow's direction indicating orientation (length is not significant).

Sketch of Particle Filter in ROS

The particle filter is the default localization algorithm. As shown in the figure above, particles spread further from the "ground truth" over time. To correct this, global information (e.g., maps) is used.

References:

- C.M. Bishop; Pattern Recognition and Machine Learning; Springer, 2006

- S. Thrun, W. Burgard & D. Fox; Probabilistic Robotics (Intelligent Robotics and Autonomous Agents series); The MIT Press, 2005

AMCL Demo

We will now use the AMCL particle filter with the map we created. Start everything except for the mapping:

Starts simulation in Gazebo with the robot inside

roslaunch turtlebot3_gazebo turtlebot3_world.launchStarts the navigation stack with the created map passed in.

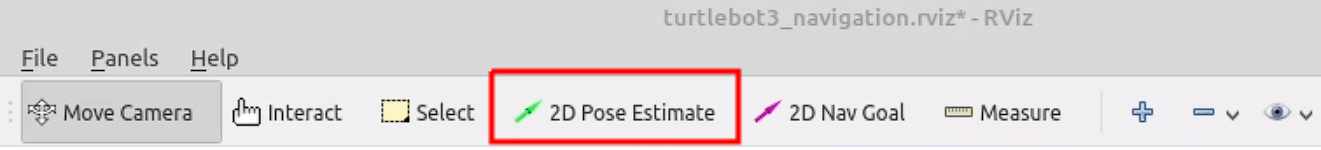

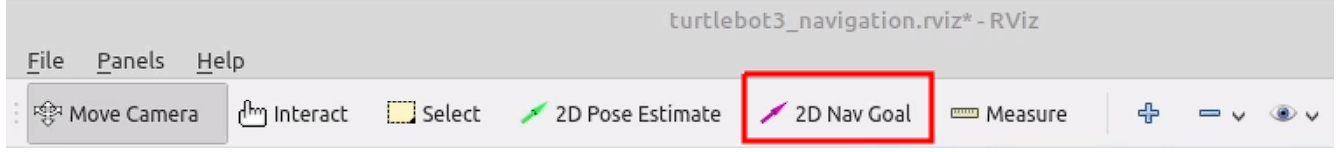

roslaunch turtlebot3_navigation turtlebot3_navigation.launch map_file:=$(rospack find first_pkg)/maps/my_map.yamlAt the beginning the robot doesn't know where it is. You can help the robot by setting an initial pose estimate in RViz. This is done by clicking the "2D Pose Estimate" button and dragging the arrow where the robot actually is.

Now the robot will localize itself. Now let the robot do the work for you. You can use the 2D Nav Goal button in RViz to set a goal for the robot. The robot will move to the goal and localize itself better with each resampling step.

AMCL Launchfile

The AMCL Demo was very TurtleBot specific. However you can configure everything by yourself:

Make a rviz folder in the first_pkg package:

mkdir -p $(rospack find first_pkg)/rvizCreate a new file called rviz_config.rviz in the rviz folder:

touch $(rospack find first_pkg)/rviz/rviz_config.rvizIn RViz, display the following:

- Robot Model

- Laser Scan

- TFs (without names)

- map

- PoseArray

Make a new launchfile called amcl.launch in the first_pkg package:

touch $(rospack find first_pkg)/launch/amcl.launchPaste the following code into the launch file:

<launch>

<!-- Start Gazebo with Turtlebot3 -->

<!--launch world-->

<include file="$(find gazebo_ros)/launch/empty_world.launch">

<arg name="world_name" value="$(find turtlebot3_gazebo)/worlds/turtlebot3_world.world"/>

<arg name="paused" value="false" />

<arg name="use_sim_time" value="true" />

<arg name="gui" value="false" />

</include>

<!--find robot description-->

<param name="robot_description" command="$(find xacro)/xacro --inorder $(find turtlebot3_description)/urdf/turtlebot3_burger.urdf.xacro" />

<!--spawn robot-->

<node name="spawn_urdf" pkg="gazebo_ros" type="spawn_model" args="-urdf -model turtlebot3_burger -x -2 -y 0 -z 0 -param robot_description" />

<!--launch remote-->

<include file="$(find turtlebot3_bringup)/launch/turtlebot3_remote.launch"/>

<!--start map server-->

<node name="map_server" pkg="map_server" type="map_server" args="$(find first_pkg)/maps/my_map.yaml"/>

<!-- Include Turtlebot movebase -->

<include file = "$(find turtlebot3_navigation)/launch/move_base.launch" />

<!-- Start RViz with a specific config -->

<node pkg="rviz" type="rviz" name="rviz" args="-d $(find first_pkg)/rviz/rviz_config.rviz" />

</launch>Now launch the file:

roslaunch first_pkg amcl.launchIf you choose Fixed Frame: base_footprint in RViz, you will see the robot's pose.

You may have noticed that the map is somewehere else.

That's becasue the robot doesn't know where it is and there is No transform from [map] to [base_footprint].

You could calculate that with a fixed Transformation, but there is a better way. We will now use the AMCL algorithm to localize the robot.

Add the AMCL node to the amcl.launch file:

<launch>

<!-- Start Gazebo with Turtlebot3 -->

<!--launch world-->

<include file="$(find gazebo_ros)/launch/empty_world.launch">

<arg name="world_name" value="$(find turtlebot3_gazebo)/worlds/turtlebot3_world.world"/>

<arg name="paused" value="false" />

<arg name="use_sim_time" value="true" />

<arg name="gui" value="false" />

</include>

<!--find robot description-->

<param name="robot_description" command="$(find xacro)/xacro --inorder $(find turtlebot3_description)/urdf/turtlebot3_burger.urdf.xacro" />

<!--spawn robot-->

<node name="spawn_urdf" pkg="gazebo_ros" type="spawn_model" args="-urdf -model turtlebot3_burger -x -2 -y 0 -z 0 -param robot_description" />

<!--launch remote-->

<include file="$(find turtlebot3_bringup)/launch/turtlebot3_remote.launch"/>

<!--start map server-->

<node name="map_server" pkg="map_server" type="map_server" args="$(find first_pkg)/maps/my_map.yaml"/>

<!-- Include Turtlebot movebase -->

<include file = "$(find turtlebot3_navigation)/launch/move_base.launch" />

<!-- Start RViz with a specific config -->

<node pkg="rviz" type="rviz" name="rviz" args="-d $(find first_pkg)/rviz/rviz_config.rviz" />

<!-- AMCL Configuration -->

<node pkg="amcl" type="amcl" name="my_amcl_node">

<param name="min_particles" value="200"/>

<param name="max_particles" value="1000"/>

<param name="kld_err" value="0.02"/>

<param name="update_min_d" value="0.20"/>

<param name="update_min_a" value="0.20"/>

<param name="resample_interval" value="1"/>

<param name="transform_tolerance" value="0.5"/>

<param name="recovery_alpha_slow" value="0.00"/>

<param name="recovery_alpha_fast" value="0.00"/>

<param name="initial_pose_x" value="-1.5"/>

<param name="initial_pose_y" value="0.5"/>

<param name="initial_pose_a" value="0"/>

<param name="gui_publish_rate" value="50.0"/>

<remap from="scan" to="scan"/>

<param name="laser_max_range" value="3.5"/>

<param name="laser_max_beams" value="180"/>

<param name="laser_z_hit" value="0.5"/>

<param name="laser_z_short" value="0.05"/>

<param name="laser_z_max" value="0.05"/>

<param name="laser_z_rand" value="0.5"/>

<param name="laser_sigma_hit" value="0.2"/>

<param name="laser_lambda_short" value="0.1"/>

<param name="laser_likelihood_max_dist" value="2.0"/>

<param name="laser_model_type" value="likelihood_field"/>

<param name="odom_model_type" value="diff"/>

<param name="odom_alphal" value="0.1"/>

<param name="odom_alpha2" value="0.1"/>

<param name="odom_alpha3" value="0.1"/>

<param name="odom_alpha4" value="0.1"/>

<param name="odom_frame_id" value="odom"/>

<param name="base_frame_id" value="base_footprint"/>

</node>

</launch>Now let the robot drive to a goal. The robot will move to the goal and localize itself better with each resampling step.

Exercise Solution

Visualization of Advanced Monte Carlo Localization

If you you can't manage to set up rviz correctly, you can download the premade rviz config file:

Download rviz_config.yamlHomework [10 Points]

Create a launch file unit3_hw.launch inside the first_pkg that:

- Spawns the TurtleBot3 Burger in an empty world.

- Spawns the TF coordinate transformations for the TurtleBot3 Burger.

- Starts the Python file

unit3_hw.py.

Create a Python file unit3_hw.py inside first_pkg that:

- Makes the robot drive to goal points, each containing the coordinates , where .

- Ensures that the robot stops at the final goal point.